mirror of https://github.com/chidiwilliams/buzz.git, synced 2026-03-14 14:45:46 +01:00

Will validate audio before transcribing (#1364)


parent 0d446a9964, commit a94d8fbd0d

2 changed files with 58 additions and 29 deletions

16 README.md

```diff
@@ -13,7 +13,7 @@ OpenAI's [Whisper](https://github.com/openai/whisper).
[](https://GitHub.com/chidiwilliams/buzz/releases/)

## Features
- Transcribe audio and video files or Youtube links
```

@ -91,12 +91,12 @@ For info on how to get latest development version with latest features and bug f

|

|||

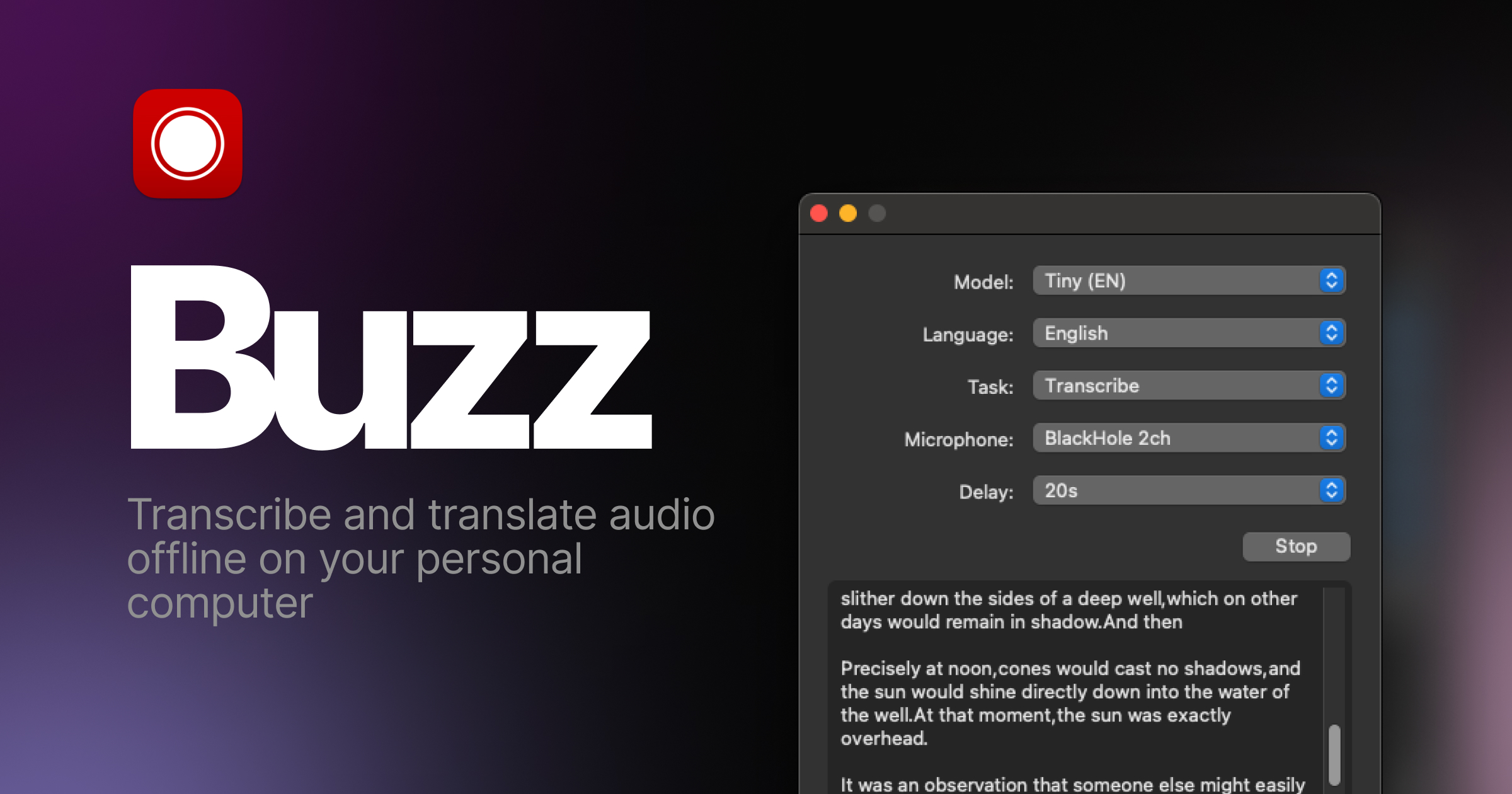

### Screenshots

|

||||

|

||||

<div style="display: flex; flex-wrap: wrap;">

|

||||

<img alt="File import" src="share/screenshots/buzz-1-import.png" style="max-width: 18%; margin-right: 1%;" />

|

||||

<img alt="Main screen" src="share/screenshots/buzz-2-main_screen.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Preferences" src="share/screenshots/buzz-3-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Model preferences" src="share/screenshots/buzz-3.2-model-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Transcript" src="share/screenshots/buzz-4-transcript.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Live recording" src="share/screenshots/buzz-5-live_recording.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Resize" src="share/screenshots/buzz-6-resize.png" style="max-width: 18%;" />

|

||||

<img alt="File import" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-1-import.png" style="max-width: 18%; margin-right: 1%;" />

|

||||

<img alt="Main screen" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-2-main_screen.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Preferences" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-3-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Model preferences" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-3.2-model-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Transcript" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-4-transcript.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Live recording" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-5-live_recording.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||

<img alt="Resize" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-6-resize.png" style="max-width: 18%;" />

|

||||

</div>

|

||||

|

||||

|

|

|

|||

|

|

```diff
@@ -28,6 +28,7 @@ from buzz.transcriber.file_transcriber import FileTranscriber
 from buzz.transcriber.transcriber import FileTranscriptionTask, Segment, Task
 from buzz.transcriber.whisper_cpp import WhisperCpp

+import av
 import faster_whisper
 import whisper
 import stable_whisper
```

```diff
@@ -36,6 +37,22 @@ from stable_whisper import WhisperResult
 PROGRESS_REGEX = re.compile(r"\d+(\.\d+)?%")


+def check_file_has_audio_stream(file_path: str) -> None:
+    """Check if a media file has at least one audio stream.
+
+    Raises:
+        ValueError: If the file has no audio streams.
+    """
+    try:
+        with av.open(file_path) as container:
+            if len(container.streams.audio) == 0:
+                raise ValueError("No audio streams found")
+    except av.error.InvalidDataError as e:
+        raise ValueError(f"Invalid media file: {e}")
+    except av.error.FileNotFoundError:
+        raise ValueError("File not found")
+
+
 class WhisperFileTranscriber(FileTranscriber):
     """WhisperFileTranscriber transcribes an audio file to text, writes the text to a file, and then opens the file
     using the default program for opening txt files."""
```
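The new `check_file_has_audio_stream` helper fails fast: it opens the container with PyAV, counts `container.streams.audio`, and folds missing-file and invalid-data errors into a single `ValueError` before any model is loaded. As a dependency-free illustration of the same pattern, here is a sketch that swaps PyAV for Python's stdlib `wave` module. It is an assumption-laden stand-in, not what Buzz does: it only understands uncompressed WAV files, treats "zero frames" as "no audio", and the name `check_wav_has_audio` is hypothetical.

```python
import wave


def check_wav_has_audio(file_path: str) -> None:
    """Hypothetical WAV-only stand-in for check_file_has_audio_stream.

    Raises:
        ValueError: If the file is missing, is not a readable WAV file,
            or contains no audio frames.
    """
    try:
        with wave.open(file_path, "rb") as wav:
            # wave cannot represent a WAV with zero channels, so "no audio"
            # here means the data chunk holds zero frames.
            if wav.getnframes() == 0:
                raise ValueError("No audio frames found")
    except FileNotFoundError:
        raise ValueError("File not found")
    except (wave.Error, EOFError) as e:
        # wave.Error: not a RIFF/WAVE file; EOFError: header is truncated.
        raise ValueError(f"Invalid WAV file: {e}")
```

As in the commit, callers only need to handle one exception type, and the message distinguishes the three failure modes.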

```diff
@@ -54,6 +71,7 @@ class WhisperFileTranscriber(FileTranscriber):
         self.stopped = False
         self.recv_pipe = None
         self.send_pipe = None
+        self.error_message = None

     def transcribe(self) -> List[Segment]:
         time_started = datetime.datetime.now()
```

```diff
@@ -119,7 +137,7 @@ class WhisperFileTranscriber(FileTranscriber):
                 logging.debug("Whisper process was terminated (exit code: %s), treating as cancellation", self.current_process.exitcode)
                 raise Exception("Transcription was canceled")
             else:
-                raise Exception("Unknown error")
+                raise Exception(self.error_message or "Unknown error")

         return self.segments
```

```diff
@@ -158,27 +176,36 @@ class WhisperFileTranscriber(FileTranscriber):
         subprocess.run = _patched_run
         subprocess.Popen = _PatchedPopen

-        with pipe_stderr(stderr_conn):
-            if task.transcription_options.model.model_type == ModelType.WHISPER_CPP:
-                segments = cls.transcribe_whisper_cpp(task)
-            elif task.transcription_options.model.model_type == ModelType.HUGGING_FACE:
-                sys.stderr.write("0%\n")
-                segments = cls.transcribe_hugging_face(task)
-                sys.stderr.write("100%\n")
-            elif (
-                task.transcription_options.model.model_type == ModelType.FASTER_WHISPER
-            ):
-                segments = cls.transcribe_faster_whisper(task)
-            elif task.transcription_options.model.model_type == ModelType.WHISPER:
-                segments = cls.transcribe_openai_whisper(task)
-            else:
-                raise Exception(
-                    f"Invalid model type: {task.transcription_options.model.model_type}"
-                )
+        try:
+            # Check if the file has audio streams before processing
+            check_file_has_audio_stream(task.file_path)
+
+            with pipe_stderr(stderr_conn):
+                if task.transcription_options.model.model_type == ModelType.WHISPER_CPP:
+                    segments = cls.transcribe_whisper_cpp(task)
+                elif task.transcription_options.model.model_type == ModelType.HUGGING_FACE:
+                    sys.stderr.write("0%\n")
+                    segments = cls.transcribe_hugging_face(task)
+                    sys.stderr.write("100%\n")
+                elif (
+                    task.transcription_options.model.model_type == ModelType.FASTER_WHISPER
+                ):
+                    segments = cls.transcribe_faster_whisper(task)
+                elif task.transcription_options.model.model_type == ModelType.WHISPER:
+                    segments = cls.transcribe_openai_whisper(task)
+                else:
+                    raise Exception(
+                        f"Invalid model type: {task.transcription_options.model.model_type}"
+                    )

-            segments_json = json.dumps(segments, ensure_ascii=True, default=vars)
-            sys.stderr.write(f"segments = {segments_json}\n")
-            sys.stderr.write(WhisperFileTranscriber.READ_LINE_THREAD_STOP_TOKEN + "\n")
+                segments_json = json.dumps(segments, ensure_ascii=True, default=vars)
+                sys.stderr.write(f"segments = {segments_json}\n")
+                sys.stderr.write(WhisperFileTranscriber.READ_LINE_THREAD_STOP_TOKEN + "\n")
+        except Exception as e:
+            # Send error message back to the parent process
+            stderr_conn.send(f"error = {str(e)}\n")
+            stderr_conn.send(WhisperFileTranscriber.READ_LINE_THREAD_STOP_TOKEN + "\n")
+            raise

     @classmethod
     def transcribe_whisper_cpp(cls, task: FileTranscriptionTask) -> List[Segment]:
```
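The new `try`/`except` in the worker guarantees that the parent's reader always sees a terminating message: on failure the worker sends an `error = …` line followed by the stop token over `stderr_conn`, then re-raises. A minimal sketch of that handshake, assuming a `multiprocessing.Pipe` connection and a placeholder stop token (the real value of `READ_LINE_THREAD_STOP_TOKEN` is not shown in this diff). `run_and_report` and `STOP_TOKEN` are hypothetical names, and for simplicity the sketch sends the segments over the connection too, whereas the commit writes them to the redirected `sys.stderr`.

```python
import json
from multiprocessing import Pipe
from multiprocessing.connection import Connection
from typing import Callable, List

# Hypothetical placeholder; the real stop token is not part of this diff.
STOP_TOKEN = "--STOP--"


def run_and_report(conn: Connection, work: Callable[[], List[dict]]) -> None:
    """Run `work`, send its result or its error, and always finish with STOP_TOKEN."""
    try:
        segments = work()
        conn.send(f"segments = {json.dumps(segments, ensure_ascii=True)}\n")
        conn.send(STOP_TOKEN + "\n")
    except Exception as e:
        # Mirror the commit: report the error as a line, close the stream, re-raise.
        conn.send(f"error = {e}\n")
        conn.send(STOP_TOKEN + "\n")
        raise


# Usage: a receive-only end and a send-only end, both in this process.
recv_conn, send_conn = Pipe(duplex=False)


def failing_work() -> List[dict]:
    raise ValueError("No audio streams found")


try:
    run_and_report(send_conn, failing_work)
except ValueError:
    pass  # run_and_report re-raises after reporting, as the commit does

first = recv_conn.recv()   # 'error = No audio streams found\n'
second = recv_conn.recv()  # '--STOP--\n'
```

Re-raising after reporting keeps the worker's own failure visible (for example through a non-zero exit code), while the message on the pipe gives the parent something more useful than "Unknown error".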

```diff
@@ -415,6 +442,8 @@ class WhisperFileTranscriber(FileTranscriber):
                     for segment in segments_dict
                 ]
                 self.segments = segments
+            elif line.startswith("error = "):
+                self.error_message = line[8:]
             else:
                 try:
                     match = PROGRESS_REGEX.search(line)
```
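On the receiving side, the parent now recognizes the `error = ` prefix (eight characters, hence `line[8:]`) and, when the worker exits with an error, raises that message instead of the generic `"Unknown error"`. A stdlib-only sketch of the line dispatcher, assuming lines arrive already stripped of their trailing newline and a simplified state object. `TranscriptionState`, `handle_line` and `finish` are hypothetical; segments stay plain dicts rather than Buzz's `Segment` type, and the cancellation branch is omitted.

```python
import json
import re
from dataclasses import dataclass, field
from typing import List, Optional

PROGRESS_REGEX = re.compile(r"\d+(\.\d+)?%")  # same pattern as in the diff


@dataclass
class TranscriptionState:
    segments: List[dict] = field(default_factory=list)
    error_message: Optional[str] = None
    progress: Optional[float] = None


def handle_line(state: TranscriptionState, line: str) -> None:
    """Dispatch one stripped line from the worker, mirroring the read_line branches."""
    if line.startswith("segments = "):
        state.segments = json.loads(line[len("segments = "):])
    elif line.startswith("error = "):
        state.error_message = line[len("error = "):]  # same as line[8:]
    else:
        match = PROGRESS_REGEX.search(line)
        if match is not None:
            state.progress = float(match.group(0).rstrip("%"))


def finish(state: TranscriptionState, exit_code: int) -> List[dict]:
    """Prefer the worker's reported message over a generic one on failure."""
    if exit_code != 0:
        raise Exception(state.error_message or "Unknown error")
    return state.segments
```

The `or "Unknown error"` fallback keeps the old behaviour for failures that never reached the `except` block, such as a worker killed before it could report.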
