Mirror of https://github.com/chidiwilliams/buzz.git, synced 2026-03-14 22:55:46 +01:00.

Compare commits

73 commits

| SHA1 |
|---|
| 1346c68c72 |
| 36f2d41557 |
| 14cacf6acf |
| c9db73722e |
| 04c07c6cae |
| 981dd3a758 |
| 7f2bf348b6 |
| a881a70a6f |
| 187d15b8e8 |
| 3869ac08db |
| f545a84ba6 |
| ff1f521a6a |
| b2f98f139e |
| 0f77deb17b |
| 4c9b249c50 |
| bb546acbf9 |
| ca8b7876fd |
| 795da67f20 |
| 749d9e6e4d |
| 125e924613 |
| 156ec35246 |
| c4d7971e04 |
| 37f5628c49 |
| 7f14fbe576 |
| a94d8fbd0d |
| 0d446a9964 |
| 6f6bc53c54 |
| 7594763154 |
| b14cf0e386 |
| 97b1619902 |
| 92fc405c4a |
| 08ae8ba43f |
| e9502881fc |
| dc27281e34 |
| f1bc725e2b |
| 43214f5c3d |
| 85d70c1e64 |
| b0a53b4c2f |
| 6f075da3d3 |
| 7099dcd9f1 |
| b4d73f62e0 |
| 6e54b5cb02 |
| 47ddc1461c |
| 665d21b391 |
| 734bd99d17 |
| c93d8c9d03 |
| de2a5b88ee |
| 4dbde2b948 |
| 7af79b6bc3 |
| ebcd42c8eb |
| b666a6a099 |
| dc0dc6b3d2 |
| 463121bb4b |
| 9d8ee2112d |
| 20ed2be44c |
| 1c146631c9 |
| 11e59dba2b |
| 76b8e52fe5 |
| 5eea1fe721 |
| 454a03bb59 |
| 97408c6a98 |
| 73376a63ac |
| cabbd487f9 |
| 252db3c3ed |
| f3765a586f |
| 5a81c715d1 |
| de1ed90f50 |
| 93559530ab |
| 629fa9f1f7 |
| 070d9f17d5 |
| ccdeb09ac9 |
| 79d8aadf2f |
| 10e74edf89 |

175 changed files with 30163 additions and 8192 deletions

.coveragerc (12 changes)

```diff
@@ -1,9 +1,19 @@
 [run]
 omit =
 buzz/whisper_cpp/*
+buzz/transcriber/local_whisper_cpp_server_transcriber.py
 *_test.py
 demucs/*
-buzz/transcriber/local_whisper_cpp_server_transcriber.py
+whisper_diarization/*
+deepmultilingualpunctuation/*
+ctc_forced_aligner/*

+[report]
+exclude_also =
+if sys.platform == "win32":
+if platform.system\(\) == "Windows":
+if platform.system\(\) == "Linux":
+if platform.system\(\) == "Darwin":
+
 [html]
 directory = coverage/html
```
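The `omit` entries are file-path globs. The new `exclude_also` entries are regular expressions matched against source lines, which is why their parentheses are escaped. Below is a minimal Python approximation of both checks, using the patterns from the new file. A few caveats apply:

* `is_omitted` and `excluded_lines` are hypothetical helpers.
* `fnmatchcase` only approximates coverage.py's own path matching.
* coverage.py also excludes the whole block under a matching `if` line. The sketch only reports the matched lines themselves.

```python
import fnmatch
import re

# Patterns copied from the new .coveragerc.
OMIT_PATTERNS = [
    "buzz/whisper_cpp/*",
    "buzz/transcriber/local_whisper_cpp_server_transcriber.py",
    "*_test.py",
    "demucs/*",
    "whisper_diarization/*",
    "deepmultilingualpunctuation/*",
    "ctc_forced_aligner/*",
]
EXCLUDE_ALSO = [
    r'if sys.platform == "win32":',
    r'if platform.system\(\) == "Windows":',
    r'if platform.system\(\) == "Linux":',
    r'if platform.system\(\) == "Darwin":',
]


def is_omitted(path: str) -> bool:
    """Approximate the [run] omit check for a relative POSIX path.

    fnmatchcase's "*" also matches "/", so "*_test.py" catches test files
    in any directory.
    """
    return any(fnmatch.fnmatchcase(path, pattern) for pattern in OMIT_PATTERNS)


def excluded_lines(source: str) -> list[int]:
    """Return 1-based numbers of source lines matched by an exclude_also regex."""
    regexes = [re.compile(pattern) for pattern in EXCLUDE_ALSO]
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if any(regex.search(line) for regex in regexes)
    ]


sample = 'import platform\nif platform.system() == "Windows":\n    import winreg\n'
print(is_omitted("buzz/whisper_cpp/whisper-cli"))  # True
print(is_omitted("buzz/transcriber/transcriber.py"))  # False
print(excluded_lines(sample))  # [2]
```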

.github/workflows/ci.yml (102 changes, vendored)

```diff
@@ -59,21 +59,10 @@ jobs:
 path: .venv
 key: venv-${{ runner.os }}-${{ runner.arch }}-${{ hashFiles('**/uv.lock') }}

-- name: Load cached Whisper models
-id: cached-whisper-models
-uses: actions/cache@v4
-with:
-path: |
-~/Library/Caches/Buzz
-~/.cache/whisper
-~/.cache/huggingface
-~/AppData/Local/Buzz/Buzz/Cache
-key: whisper-models
-
-- uses: AnimMouse/setup-ffmpeg@v1.2.1
+- uses: AnimMouse/setup-ffmpeg@v1
 id: setup-ffmpeg
 with:
-version: ${{ matrix.os == 'macos-15-intel' && '7.1.1' || matrix.os == 'macos-latest' && '71' || '7.1' }}
+version: ${{ matrix.os == 'macos-15-intel' && '7.1.1' || matrix.os == 'macos-latest' && '80' || '8.0' }}

 - name: Test ffmpeg
 run: ffmpeg -i ./testdata/audio-long.mp3 ./testdata/audio-long.wav
@@ -88,7 +77,13 @@ jobs:

 if [ "$(lsb_release -rs)" == "22.04" ]; then
 sudo apt-get install libegl1-mesa
+
+# Add ubuntu-toolchain-r PPA for newer libstdc++6 with GLIBCXX_3.4.32
+sudo add-apt-repository ppa:ubuntu-toolchain-r/test -y
+sudo apt-get update
+sudo apt-get install -y libstdc++6
 fi
+
 sudo apt-get install libyaml-dev libxkbcommon-x11-0 libxcb-icccm4 libxcb-image0 libxcb-keysyms1 libxcb-randr0 libxcb-render-util0 libxcb-xinerama0 libxcb-shape0 libxcb-cursor0 libportaudio2 gettext libpulse0 libgl1-mesa-dev libvulkan-dev ccache
 if: "startsWith(matrix.os, 'ubuntu-')"

@@ -99,6 +94,8 @@ jobs:
 run: |
 uv run make test
 shell: bash
+env:
+PYTHONFAULTHANDLER: "1"

 - name: Upload coverage reports to Codecov with GitHub Action
 uses: codecov/codecov-action@v4
@@ -110,7 +107,7 @@ jobs:

 build:
 runs-on: ${{ matrix.os }}
-timeout-minutes: 60
+timeout-minutes: 90
 env:
 BUZZ_DISABLE_TELEMETRY: true
 strategy:
@@ -166,22 +163,30 @@ jobs:

 if [ "$(lsb_release -rs)" == "22.04" ]; then
 sudo apt-get install libegl1-mesa
+
+# Add ubuntu-toolchain-r PPA for newer libstdc++6 with GLIBCXX_3.4.32
+sudo add-apt-repository ppa:ubuntu-toolchain-r/test -y
+sudo apt-get update
+sudo apt-get install -y libstdc++6
 fi
+
 sudo apt-get install libyaml-dev libxkbcommon-x11-0 libxcb-icccm4 libxcb-image0 libxcb-keysyms1 libxcb-randr0 libxcb-render-util0 libxcb-xinerama0 libxcb-shape0 libxcb-cursor0 libportaudio2 gettext libpulse0 libgl1-mesa-dev libvulkan-dev ccache
 if: "startsWith(matrix.os, 'ubuntu-')"

 - name: Install dependencies
 run: uv sync

-- uses: AnimMouse/setup-ffmpeg@v1.2.1
+- uses: AnimMouse/setup-ffmpeg@v1
 id: setup-ffmpeg
 with:
-version: ${{ matrix.os == 'macos-15-intel' && '7.1.1' || matrix.os == 'macos-latest' && '71' || '7.1' }}
+version: ${{ matrix.os == 'macos-15-intel' && '7.1.1' || matrix.os == 'macos-latest' && '80' || '8.0' }}

 - name: Install MSVC for Windows
 run: |
 if [ "$RUNNER_OS" == "Windows" ]; then
 uv add msvc-runtime
+uv pip install -U torch==2.8.0+cu129 torchaudio==2.8.0+cu129 --index-url https://download.pytorch.org/whl/cu129
+uv pip install nvidia-cublas-cu12==12.9.1.4 nvidia-cuda-cupti-cu12==12.9.79 nvidia-cuda-runtime-cu12==12.9.79 --extra-index-url https://pypi.ngc.nvidia.com

 uv cache clean
 uv run pip cache purge
@@ -357,32 +362,41 @@ jobs:
 with:
 files: |
 Buzz*-unix.tar.gz
-Buzz*-windows.exe
-Buzz*-mac.dmg
+Buzz*.exe
+Buzz*.bin
+Buzz*.dmg

-deploy_brew_cask:
-runs-on: macos-latest
-env:
-BUZZ_DISABLE_TELEMETRY: true
-needs: [release]
-if: startsWith(github.ref, 'refs/tags/')
-steps:
-- uses: actions/checkout@v4
-with:
-submodules: recursive
-
-- name: Install uv
-uses: astral-sh/setup-uv@v6
-
-- name: Set up Python
-uses: actions/setup-python@v5
-with:
-python-version: "3.12"
-
-- name: Install dependencies
-run: uv sync
-
-- name: Upload to Brew
-run: uv run make upload_brew
-env:
-HOMEBREW_GITHUB_API_TOKEN: ${{ secrets.HOMEBREW_GITHUB_API_TOKEN }}
+# Brew Cask deployment fails and the app is deprecated on Brew.
+# deploy_brew_cask:
+# runs-on: macos-latest
+# env:
+# BUZZ_DISABLE_TELEMETRY: true
+# needs: [release]
+# if: startsWith(github.ref, 'refs/tags/')
+# steps:
+# - uses: actions/checkout@v4
+# with:
+# submodules: recursive
+#
+# # Should be removed with next update to whisper.cpp
+# - name: Downgrade Xcode
+# uses: maxim-lobanov/setup-xcode@v1
+# with:
+# xcode-version: '16.0.0'
+# if: matrix.os == 'macos-latest'
+#
+# - name: Install uv
+# uses: astral-sh/setup-uv@v6
+#
+# - name: Set up Python
+# uses: actions/setup-python@v5
+# with:
+# python-version: "3.12"
+#
+# - name: Install dependencies
+# run: uv sync
+#
+# - name: Upload to Brew
+# run: uv run make upload_brew
+# env:
+# HOMEBREW_GITHUB_API_TOKEN: ${{ secrets.HOMEBREW_GITHUB_API_TOKEN }}
```
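Both `setup-ffmpeg` steps pick the FFmpeg version with the `cond && a || b` idiom of GitHub Actions expressions. Python's `and`/`or` return operands the same way, so the new expression can be mirrored directly. In this sketch, `ffmpeg_version` is a hypothetical name. The two non-macOS runner names are only illustrative, because the workflow's matrix is not part of this diff.

```python
def ffmpeg_version(matrix_os: str) -> str:
    # Same shape as the workflow expression:
    # matrix.os == 'macos-15-intel' && '7.1.1' || matrix.os == 'macos-latest' && '80' || '8.0'
    return (
        (matrix_os == "macos-15-intel" and "7.1.1")
        or (matrix_os == "macos-latest" and "80")
        or "8.0"
    )


for runner in ["macos-15-intel", "macos-latest", "ubuntu-22.04", "windows-latest"]:
    print(runner, ffmpeg_version(runner))
```

The idiom relies on the middle values being truthy. An empty string in place of `'80'` would fall through to the `'8.0'` default, both in the workflow and in Python.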

.github/workflows/snapcraft.yml (60 changes, vendored)

```diff
@@ -14,28 +14,58 @@ concurrency:

 jobs:
 build:
-runs-on: ubuntu-latest
+runs-on: ubuntu-24.04
+timeout-minutes: 90
+env:
+BUZZ_DISABLE_TELEMETRY: true
 outputs:
 snap: ${{ steps.snapcraft.outputs.snap }}
 steps:
-- name: Maximize build space
-uses: easimon/maximize-build-space@master
-with:
-root-reserve-mb: 26000
-swap-size-mb: 1024
-remove-dotnet: 'true'
-remove-android: 'true'
-remove-haskell: 'true'
-remove-codeql: 'true'
-remove-docker-images: 'true'
+# Ideas from https://github.com/orgs/community/discussions/25678
+- name: Remove unused build tools
+run: |
+sudo apt-get remove -y azure-cli google-cloud-sdk hhvm google-chrome-stable firefox powershell mono-devel || true
+sudo apt-get autoremove -y
+sudo apt-get clean
+python -m pip cache purge
+rm -rf /opt/hostedtoolcache || true
+- name: Check available disk space
+run: |
+echo "=== Disk space ==="
+df -h
+echo "=== Memory ==="
+free -h
 - uses: actions/checkout@v4
 with:
 submodules: recursive
-- uses: snapcore/action-build@v1.3.0
+- name: Install Snapcraft and dependencies
+run: |
+set -x
+# Ensure snapd is ready
+sudo systemctl start snapd.socket
+sudo snap wait system seed.loaded
+
+echo "=== Installing snapcraft ==="
+sudo snap install --classic snapcraft
+
+echo "=== Installing gnome extension dependencies ==="
+sudo snap install gnome-46-2404 || { echo "Failed to install gnome-46-2404"; sudo journalctl -u snapd --no-pager -n 50; exit 1; }
+sudo snap install gnome-46-2404-sdk || { echo "Failed to install gnome-46-2404-sdk"; sudo journalctl -u snapd --no-pager -n 50; exit 1; }
+
+echo "=== Installing build-snaps ==="
+sudo snap install --classic astral-uv || { echo "Failed to install astral-uv"; sudo journalctl -u snapd --no-pager -n 50; exit 1; }
+
+echo "=== Installed snaps ==="
+snap list
+- name: Check disk space before build
+run: df -h
+- name: Build snap
 id: snapcraft
-- run: |
-sudo apt-get update
-sudo apt-get install libportaudio2 libtbb-dev
+env:
+SNAPCRAFT_BUILD_ENVIRONMENT: host
+run: |
+sudo -E snapcraft pack --verbose --destructive-mode
+echo "snap=$(ls *.snap)" >> $GITHUB_OUTPUT
 - run: sudo snap install --devmode *.snap
 - run: |
 cd $HOME
```
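The new `Build snap` step exposes the snap's file name as a step output. It does this by appending `snap=<name>` to the file named in `$GITHUB_OUTPUT`, and the job's `outputs.snap` then reads it via `steps.snapcraft.outputs.snap`.

Below is a Python sketch of the same step, using a hypothetical `write_snap_output` helper. Unlike `ls *.snap`, which could record an empty or multi-line value, it writes nothing unless exactly one `.snap` file exists.

```python
import glob
import os


def write_snap_output(workdir: str, output_file: str) -> str:
    """Append snap=<file name> to the step-output file, like the shell step does."""
    snaps = sorted(glob.glob(os.path.join(workdir, "*.snap")))
    if len(snaps) != 1:
        # Fail loudly instead of publishing an empty or malformed output.
        raise RuntimeError(f"expected exactly one .snap file, found {len(snaps)}")
    name = os.path.basename(snaps[0])
    with open(output_file, "a", encoding="utf-8") as handle:
        handle.write(f"snap={name}\n")
    return name


if __name__ == "__main__" and "GITHUB_OUTPUT" in os.environ:
    write_snap_output(".", os.environ["GITHUB_OUTPUT"])
```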

.gitignore (4 changes, vendored)

```diff
@@ -11,6 +11,7 @@ coverage.xml
 .idea/
 .venv/
 venv/
+.claude/

 # whisper_cpp
 whisper_cpp
@@ -31,4 +32,5 @@ benchmarks.json
 /coverage/
 /wheelhouse/
 /.flatpak-builder
 /repo
+/nemo_msdd_configs
```

.gitmodules (12 changes, vendored)

```diff
@@ -1,3 +1,15 @@
 [submodule "whisper.cpp"]
 path = whisper.cpp
 url = https://github.com/ggerganov/whisper.cpp
+[submodule "whisper_diarization"]
+path = whisper_diarization
+url = https://github.com/MahmoudAshraf97/whisper-diarization
+[submodule "demucs_repo"]
+path = demucs_repo
+url = https://github.com/MahmoudAshraf97/demucs.git
+[submodule "deepmultilingualpunctuation"]
+path = deepmultilingualpunctuation
+url = https://github.com/oliverguhr/deepmultilingualpunctuation.git
+[submodule "ctc_forced_aligner"]
+path = ctc_forced_aligner
+url = https://github.com/MahmoudAshraf97/ctc-forced-aligner.git
```
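Git reads this file with its own config parser, for example via `git config --file .gitmodules --get-regexp path`. Its simple `key = value` layout also parses with Python's `configparser`, which is handy for checking the four new submodules against the paths used in `Buzz.spec` and `.coveragerc`.

Below is a sketch with a hypothetical `read_submodules` helper. The sample assumes git's usual tab indentation, which this capture of the diff does not show.

```python
import configparser

SAMPLE = """\
[submodule "whisper.cpp"]
\tpath = whisper.cpp
\turl = https://github.com/ggerganov/whisper.cpp
[submodule "ctc_forced_aligner"]
\tpath = ctc_forced_aligner
\turl = https://github.com/MahmoudAshraf97/ctc-forced-aligner.git
"""


def read_submodules(text: str) -> dict[str, dict[str, str]]:
    """Map each submodule name to its keys (path, url)."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(text)
    submodules: dict[str, dict[str, str]] = {}
    for section in parser.sections():
        # Section names look like: submodule "whisper.cpp"
        kind, _, name = section.partition(" ")
        if kind == "submodule":
            submodules[name.strip('"')] = dict(parser[section])
    return submodules


for name, entry in read_submodules(SAMPLE).items():
    print(f"{name}: {entry['path']} <- {entry['url']}")
```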

Buzz.spec (39 changes)

```diff
@@ -13,7 +13,6 @@ datas += collect_data_files("torch")
 datas += collect_data_files("demucs")
 datas += copy_metadata("tqdm")
 datas += copy_metadata("torch")
-datas += copy_metadata("demucs")
 datas += copy_metadata("regex")
 datas += copy_metadata("requests")
 datas += copy_metadata("packaging")
@@ -23,6 +22,19 @@ datas += copy_metadata("tokenizers")
 datas += copy_metadata("huggingface-hub")
 datas += copy_metadata("safetensors")
 datas += copy_metadata("pyyaml")
+datas += copy_metadata("julius")
+datas += copy_metadata("openunmix")
+datas += copy_metadata("lameenc")
+datas += copy_metadata("diffq")
+datas += copy_metadata("einops")
+datas += copy_metadata("hydra-core")
+datas += copy_metadata("hydra-colorlog")
+datas += copy_metadata("museval")
+datas += copy_metadata("submitit")
+datas += copy_metadata("treetable")
+datas += copy_metadata("soundfile")
+datas += copy_metadata("dora-search")
+datas += copy_metadata("lhotse")

 # Allow transformers package to load __init__.py file dynamically:
 # https://github.com/chidiwilliams/buzz/issues/272
@@ -31,7 +43,13 @@ datas += collect_data_files("transformers", include_py_files=True)
 datas += collect_data_files("faster_whisper", include_py_files=True)
 datas += collect_data_files("stable_whisper", include_py_files=True)
 datas += collect_data_files("whisper")
-datas += [("demucs", "demucs")]
+datas += collect_data_files("demucs", include_py_files=True)
+datas += collect_data_files("whisper_diarization", include_py_files=True)
+datas += collect_data_files("deepmultilingualpunctuation", include_py_files=True)
+datas += collect_data_files("ctc_forced_aligner", include_py_files=True, excludes=["build"])
+datas += collect_data_files("nemo", include_py_files=True)
+datas += collect_data_files("lightning_fabric", include_py_files=True)
+datas += collect_data_files("pytorch_lightning", include_py_files=True)
 datas += [("buzz/assets/*", "assets")]
 datas += [("buzz/locale", "locale")]
 datas += [("buzz/schema.sql", ".")]
@@ -87,7 +105,22 @@ a = Analysis(
 pathex=[],
 binaries=binaries,
 datas=datas,
-hiddenimports=[],
+hiddenimports=[
+"dora", "dora.log",
+"julius", "julius.core", "julius.resample",
+"openunmix", "openunmix.filtering",
+"lameenc",
+"diffq",
+"einops",
+"hydra", "hydra.core", "hydra.core.global_hydra",
+"hydra_colorlog",
+"museval",
+"submitit",
+"treetable",
+"soundfile",
+"_soundfile_data",
+"lhotse",
+],
 hookspath=[],
 hooksconfig={},
 runtime_hooks=[],
```
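`hiddenimports` lists modules that PyInstaller's static analysis would otherwise miss, typically because they are imported dynamically. Before a build, a sketch like the following can flag entries that are not installed in the current environment. It uses `importlib.util.find_spec`, and `missing_modules` is a hypothetical helper. Note that checking a dotted name imports its parent package.

```python
import importlib.util

# hiddenimports copied from the new Buzz.spec.
HIDDEN_IMPORTS = [
    "dora", "dora.log",
    "julius", "julius.core", "julius.resample",
    "openunmix", "openunmix.filtering",
    "lameenc",
    "diffq",
    "einops",
    "hydra", "hydra.core", "hydra.core.global_hydra",
    "hydra_colorlog",
    "museval",
    "submitit",
    "treetable",
    "soundfile",
    "_soundfile_data",
    "lhotse",
]


def missing_modules(names: list[str]) -> list[str]:
    """Return the names the current environment cannot resolve."""
    missing = []
    for name in names:
        try:
            # For dotted names, find_spec imports the parent package first.
            if importlib.util.find_spec(name) is None:
                missing.append(name)
        except ImportError:
            # Raised when a parent package of a dotted name is missing.
            missing.append(name)
    return missing


if __name__ == "__main__":
    print(missing_modules(HIDDEN_IMPORTS) or "all hidden imports resolve")
```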

CLAUDE.md (1 change, Normal file)

```diff
@@ -0,0 +1 @@
+- Use uv to run tests and any scripts
```

```diff
@@ -28,7 +28,8 @@ What version of the Buzz are you using? On what OS? What are steps to reproduce
 **Logs**

 Log files contain valuable information about what the Buzz was doing before the issue occurred. You can get the logs like this:
-* Mac and Linux run the app from the terminal and check the output.
+* Linux run the app from the terminal and check the output.
+* Mac get logs from `~/Library/Logs/Buzz`.
 * Windows paste this into the Windows Explorer address bar `%USERPROFILE%\AppData\Local\Buzz\Buzz\Logs` and check the logs file.

 **Test on latest version**
```
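The updated logging instructions give a separate log location per platform. Below is a sketch of that lookup in Python, with a hypothetical `buzz_log_dir` helper. The macOS and Windows paths come from the instructions. On Linux the helper returns `None`, because the instructions say to read the terminal output instead.

```python
import sys
from pathlib import Path
from typing import Optional


def buzz_log_dir(platform: str = sys.platform, home: Optional[Path] = None) -> Optional[Path]:
    """Where the instructions say Buzz logs live; None means 'use the terminal output'."""
    home = Path.home() if home is None else home
    if platform == "darwin":
        return home / "Library" / "Logs" / "Buzz"
    if platform == "win32":
        # %USERPROFILE%\AppData\Local\Buzz\Buzz\Logs
        return home / "AppData" / "Local" / "Buzz" / "Buzz" / "Logs"
    # Linux: run Buzz from a terminal and read its output instead.
    return None


log_dir = buzz_log_dir()
print(log_dir if log_dir is not None else "Run Buzz from a terminal and check its output.")
```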

````diff
@@ -51,9 +52,9 @@ Linux versions get also pushed to the snap. To install latest development versio
 sudo apt-get install --no-install-recommends libyaml-dev libtbb-dev libxkbcommon-x11-0 libxcb-icccm4 libxcb-image0 libxcb-keysyms1 libxcb-randr0 libxcb-render-util0 libxcb-xinerama0 libxcb-shape0 libxcb-cursor0 libportaudio2 gettext libpulse0 ffmpeg
 ```
 On versions prior to Ubuntu 24.04 install `sudo apt-get install --no-install-recommends libegl1-mesa`

 5. Install the dependencies `uv sync`
-6. Build Buzz `uv build`
-7. Run Buzz `uv run buzz`
+6. Run Buzz `uv run buzz`

 #### Necessary dependencies for Faster Whisper on GPU

@@ -80,8 +81,7 @@ On versions prior to Ubuntu 24.04 install `sudo apt-get install --no-install-rec
 3. Install uv `curl -LsSf https://astral.sh/uv/install.sh | sh` (or `brew install uv`)
 4. Install system dependencies you may be missing `brew install ffmpeg`
 5. Install the dependencies `uv sync`
-6. Build Buzz `uv build`
-7. Run Buzz `uv run buzz`
+6. Run Buzz `uv run buzz`



@@ -93,16 +93,18 @@ Assumes you have [Git](https://git-scm.com/downloads) and [python](https://www.p
 ```
 Set-ExecutionPolicy Bypass -Scope Process -Force; [System.Net.ServicePointManager]::SecurityProtocol = [System.Net.ServicePointManager]::SecurityProtocol -bor 3072; iex ((New-Object System.Net.WebClient).DownloadString('https://community.chocolatey.org/install.ps1'))
 ```
-2. Install the GNU make. `choco install make`
+2. Install the build tools. `choco install make cmake`
 3. Install the ffmpeg. `choco install ffmpeg`
-4. Install [MSYS2](https://www.msys2.org/), follow [this guide](https://sajidifti.medium.com/how-to-install-gcc-and-gdb-on-windows-using-msys2-tutorial-0fceb7e66454).
-5. Clone the repository `git clone --recursive https://github.com/chidiwilliams/buzz.git`
-6. Enter repo folder `cd buzz`
-7. Install uv `powershell -ExecutionPolicy ByPass -c "irm https://astral.sh/uv/install.ps1 | iex"`
-8. Install the dependencies `uv sync`
-9. `cp -r .\dll_backup\ .\buzz\`
-10. Build Buzz `uv build`
-11. Run Buzz `uv run buzz`
+4. Download [Build Tools for Visual Studio 2022](https://visualstudio.microsoft.com/vs/older-downloads/) and install "Desktop development with C++" workload.
+5. Add location of `namke` to your PATH environment variable. Usually it is `C:\Program Files (x86)\Microsoft Visual Studio\2022\BuildTools\VC\Tools\MSVC\14.44.35207\bin\Hostx64\x86`
+6. Install Vulkan SDK from https://vulkan.lunarg.com/sdk/home
+7. Clone the repository `git clone --recursive https://github.com/chidiwilliams/buzz.git`
+8. Enter repo folder `cd buzz`
+9. Install uv `powershell -ExecutionPolicy ByPass -c "irm https://astral.sh/uv/install.ps1 | iex"`
+10. Install the dependencies `uv sync`
+11. Build Whisper.cpp `uv run make buzz/whisper_cpp`
+12. `cp -r .\dll_backup\ .\buzz\`
+13. Run Buzz `uv run buzz`

 Note: It should be safe to ignore any "syntax errors" you see during the build. Buzz will work. Also you can ignore any errors for FFmpeg. Buzz tries to load FFmpeg by several different means and some of them throw errors, but FFmpeg should eventually be found and work.

@@ -118,16 +120,4 @@ uv add --index https://pypi.ngc.nvidia.com nvidia-cublas-cu12==12.8.3.14 nvidia-

 To use Faster Whisper on GPU, install the following libraries:
 * [cuBLAS](https://developer.nvidia.com/cublas)
 * [cuDNN](https://developer.nvidia.com/cudnn)
-
-If you run into issues with FFmpeg, ensure ffmpeg dependencies are installed
-```
-pip3 uninstall ffmpeg ffmpeg-python
-pip3 install ffmpeg
-pip3 install ffmpeg-python
-```
-
-For Whisper.cpp you will need to install Vulkan SDK.
-Follow the instructions here https://vulkan.lunarg.com/doc/sdk/latest/windows/getting_started.html
-
-Run Buzz `python -m buzz`
````
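Several of the new Windows steps exist only to put build tools on `PATH`, step 5 in particular. Below is a quick pre-flight check with `shutil.which`. It is a sketch: the tool list is an assumption drawn from those steps, `missing_tools` is a hypothetical helper, and the Vulkan SDK is not checked.

```python
import shutil
from collections.abc import Iterable

# Assumed list, read off the updated Windows steps: git for cloning, make and
# cmake from Chocolatey, ffmpeg, nmake from the VS Build Tools, and uv.
REQUIRED_TOOLS = ("git", "make", "cmake", "ffmpeg", "nmake", "uv")


def missing_tools(tools: Iterable[str] = REQUIRED_TOOLS) -> list[str]:
    """Return every tool that shutil.which cannot find on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


missing = missing_tools()
print("Not found on PATH: " + ", ".join(missing) if missing else "All build tools found on PATH.")
```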

Makefile (56 changes)

```diff
@@ -1,5 +1,5 @@
-version := 1.3.2
-version_escaped := $$(echo ${version} | sed -e 's/\./\\./g')
+# Change also in pyproject.toml and buzz/__version__.py
+version := 1.4.4

 mac_app_path := ./dist/Buzz.app
 mac_zip_path := ./dist/Buzz-${version}-mac.zip
```
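The new comment in the Makefile asks for the version to be changed in three places. Below is a sketch of a consistency check. It assumes each file contains a simple `version`/`VERSION` assignment line, since the layouts of `pyproject.toml` and `buzz/__version__.py` are not shown in this diff. `check_versions` and `read_version` are hypothetical helpers.

```python
import re
from pathlib import Path

# Assumed line shapes: `version := 1.4.4` in the Makefile, `version = "1.4.4"`
# in pyproject.toml, and a `VERSION = "1.4.4"`-style line in buzz/__version__.py.
VERSION_RE = re.compile(
    r'^\s*version\s*:?=\s*"?([0-9][0-9A-Za-z.+-]*)"?', re.IGNORECASE | re.MULTILINE
)
VERSION_FILES = ("Makefile", "pyproject.toml", "buzz/__version__.py")


def read_version(path: Path) -> str:
    """Return the first version string assigned in the file."""
    match = VERSION_RE.search(path.read_text(encoding="utf-8"))
    if match is None:
        raise ValueError(f"no version line found in {path}")
    return match.group(1)


def check_versions(root: Path) -> str:
    """Return the shared version, or raise ValueError if the files disagree."""
    versions = {name: read_version(root / name) for name in VERSION_FILES}
    if len(set(versions.values())) != 1:
        raise ValueError(f"version mismatch: {versions}")
    return next(iter(versions.values()))
```

Called from the repository root as `check_versions(Path("."))`, it returns the shared version string or raises `ValueError` listing each file's value.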

```diff
@@ -23,15 +23,23 @@ ifeq ($(OS), Windows_NT)
 -rm -rf buzz/whisper_cpp
 -rm -rf whisper.cpp/build
 -rm -rf dist/*
+-rm -rf buzz/__pycache__ buzz/**/__pycache__ buzz/**/**/__pycache__ buzz/**/**/**/__pycache__
+-for /d /r buzz %%d in (__pycache__) do @if exist "%%d" rmdir /s /q "%%d"
 else
 rm -rf buzz/whisper_cpp || true
 rm -rf whisper.cpp/build || true
 rm -rf dist/* || true
+find buzz -type d -name "__pycache__" -exec rm -rf {} + 2>/dev/null || true
 endif

-COVERAGE_THRESHOLD := 75
+COVERAGE_THRESHOLD := 70

 test: buzz/whisper_cpp
+# A check to get updates of yt-dlp. Should run only on local as part of regular development operations
+# Sort of a local "update checker"
+ifndef CI
+uv lock --upgrade-package yt-dlp
+endif
 pytest -s -vv --cov=buzz --cov-report=xml --cov-report=html --benchmark-skip --cov-fail-under=${COVERAGE_THRESHOLD} --cov-config=.coveragerc

 benchmarks: buzz/whisper_cpp
```
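The cleanup section now also deletes `__pycache__` directories, using `for /d /r` on Windows and `find ... -exec rm -rf {} +` elsewhere. Below is the same cleanup written once in Python, as a cross-platform sketch with a hypothetical `remove_pycache` helper.

```python
import shutil
from pathlib import Path


def remove_pycache(root: Path) -> int:
    """Delete every __pycache__ directory below root and return how many were found.

    Like the Makefile's `|| true`, errors while deleting are ignored.
    """
    # Collect first so the tree is not modified while rglob is walking it.
    targets = [path for path in root.rglob("__pycache__") if path.is_dir()]
    for target in targets:
        shutil.rmtree(target, ignore_errors=True)
    return len(targets)
```

For example, `remove_pycache(Path("buzz"))` covers the `buzz` tree that both Makefile branches clean.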

@ -49,30 +57,33 @@ ifeq ($(OS), Windows_NT)

|

||||||

# The _DISABLE_CONSTEXPR_MUTEX_CONSTRUCTOR is needed to prevent mutex lock issues on Windows

|

# The _DISABLE_CONSTEXPR_MUTEX_CONSTRUCTOR is needed to prevent mutex lock issues on Windows

|

||||||

# https://github.com/actions/runner-images/issues/10004#issuecomment-2156109231

|

# https://github.com/actions/runner-images/issues/10004#issuecomment-2156109231

|

||||||

# -DCMAKE_[C|CXX]_COMPILER_WORKS=TRUE is used to prevent issue in building test program that fails on CI

|

# -DCMAKE_[C|CXX]_COMPILER_WORKS=TRUE is used to prevent issue in building test program that fails on CI

|

||||||

cmake -S whisper.cpp -B whisper.cpp/build/ -DCMAKE_BUILD_TYPE=Release -DBUILD_SHARED_LIBS=OFF -DCMAKE_INSTALL_RPATH='$$ORIGIN' -DCMAKE_BUILD_WITH_INSTALL_RPATH=ON -DCMAKE_C_FLAGS="-D_DISABLE_CONSTEXPR_MUTEX_CONSTRUCTOR" -DCMAKE_CXX_FLAGS="-D_DISABLE_CONSTEXPR_MUTEX_CONSTRUCTOR" -DCMAKE_C_COMPILER_WORKS=TRUE -DCMAKE_CXX_COMPILER_WORKS=TRUE -DGGML_VULKAN=1

|

# GGML_NATIVE=OFF ensures we don't use -march=native (which would target the build machine's CPU)

|

||||||

|

cmake -S whisper.cpp -B whisper.cpp/build/ -DCMAKE_BUILD_TYPE=Release -DBUILD_SHARED_LIBS=OFF -DCMAKE_INSTALL_RPATH='$$ORIGIN' -DCMAKE_BUILD_WITH_INSTALL_RPATH=ON -DCMAKE_C_FLAGS="-D_DISABLE_CONSTEXPR_MUTEX_CONSTRUCTOR" -DCMAKE_CXX_FLAGS="-D_DISABLE_CONSTEXPR_MUTEX_CONSTRUCTOR" -DCMAKE_C_COMPILER_WORKS=TRUE -DCMAKE_CXX_COMPILER_WORKS=TRUE -DGGML_VULKAN=1 -DGGML_NATIVE=OFF

|

||||||

cmake --build whisper.cpp/build -j --config Release --verbose

|

cmake --build whisper.cpp/build -j --config Release --verbose

|

||||||

|

|

||||||

-mkdir buzz/whisper_cpp

|

-mkdir buzz/whisper_cpp

|

||||||

cp whisper.cpp/build/bin/Release/whisper-cli.exe buzz/whisper_cpp/

|

cp whisper.cpp/build/bin/Release/whisper-cli.exe buzz/whisper_cpp/

|

||||||

cp whisper.cpp/build/bin/Release/whisper-server.exe buzz/whisper_cpp/

|

cp whisper.cpp/build/bin/Release/whisper-server.exe buzz/whisper_cpp/

|

||||||

cp dll_backup/SDL2.dll buzz/whisper_cpp

|

cp dll_backup/SDL2.dll buzz/whisper_cpp

|

||||||

|

PowerShell -NoProfile -ExecutionPolicy Bypass -Command "if (-not (Test-Path 'buzz\whisper_cpp\ggml-silero-v6.2.0.bin')) { Start-BitsTransfer -Source https://huggingface.co/ggml-org/whisper-vad/resolve/main/ggml-silero-v6.2.0.bin -Destination 'buzz\whisper_cpp\ggml-silero-v6.2.0.bin' }"

|

||||||

endif

|

endif

|

||||||

|

|

||||||

ifeq ($(shell uname -s), Linux)

|

ifeq ($(shell uname -s), Linux)

|

||||||

# Build Whisper with Vulkan support

|

# Build Whisper with Vulkan support

|

||||||

|

# GGML_NATIVE=OFF ensures we don't use -march=native (which would target the build machine's CPU)

|

||||||

|

# This enables portable SSE4.2/AVX/AVX2 optimizations that work on most x86_64 CPUs

|

||||||

rm -rf whisper.cpp/build || true

|

rm -rf whisper.cpp/build || true

|

||||||

-mkdir -p buzz/whisper_cpp

|

-mkdir -p buzz/whisper_cpp

|

||||||

cmake -S whisper.cpp -B whisper.cpp/build/ -DCMAKE_BUILD_TYPE=Release -DBUILD_SHARED_LIBS=ON -DCMAKE_INSTALL_RPATH='$$ORIGIN' -DCMAKE_BUILD_WITH_INSTALL_RPATH=ON -DGGML_VULKAN=1

|

cmake -S whisper.cpp -B whisper.cpp/build/ -DCMAKE_BUILD_TYPE=Release -DBUILD_SHARED_LIBS=ON -DCMAKE_INSTALL_RPATH='$$ORIGIN' -DCMAKE_BUILD_WITH_INSTALL_RPATH=ON -DGGML_VULKAN=1 -DGGML_NATIVE=OFF

|

||||||

cmake --build whisper.cpp/build -j --config Release --verbose

|

cmake --build whisper.cpp/build -j --config Release --verbose

|

||||||

cp whisper.cpp/build/bin/whisper-cli buzz/whisper_cpp/ || true

|

cp whisper.cpp/build/bin/whisper-cli buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/bin/whisper-server buzz/whisper_cpp/ || true

|

cp whisper.cpp/build/bin/whisper-server buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/src/libwhisper.so buzz/whisper_cpp/ || true

|

cp -P whisper.cpp/build/src/libwhisper.so* buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/src/libwhisper.so.1 buzz/whisper_cpp/ || true

|

cp -P whisper.cpp/build/ggml/src/libggml.so* buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/src/libwhisper.so.1.7.6 buzz/whisper_cpp/ || true

|

cp -P whisper.cpp/build/ggml/src/libggml-base.so* buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/ggml/src/libggml.so buzz/whisper_cpp/ || true

|

cp -P whisper.cpp/build/ggml/src/libggml-cpu.so* buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/ggml/src/libggml-base.so buzz/whisper_cpp/ || true

|

cp -P whisper.cpp/build/ggml/src/ggml-vulkan/libggml-vulkan.so* buzz/whisper_cpp/ || true

|

||||||
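The new Linux rules replace per-file copies with `cp -P` and `libwhisper.so*`-style globs. With GNU coreutils `cp`, as on the Linux build hosts this block targets, `-P` (`--no-dereference`) copies symbolic links as links. A versioned chain such as `libfoo.so -> libfoo.so.1 -> libfoo.so.1.2.3` therefore arrives in `buzz/whisper_cpp/` intact, rather than as several full copies of the same library. The self-contained demonstration below uses a scratch directory and a made-up `libdemo` library. BSD/macOS `cp` may behave differently without `-R`.

```shell
#!/usr/bin/env bash
set -eu
# Build a fake versioned shared-library chain in a scratch directory.
work=$(mktemp -d)
mkdir -p "$work/build" "$work/dist"
echo "pretend ELF" > "$work/build/libdemo.so.1.2.3"
ln -s libdemo.so.1.2.3 "$work/build/libdemo.so.1"   # soname link
ln -s libdemo.so.1 "$work/build/libdemo.so"         # development link

# Same pattern as the Makefile: copy the whole chain, keeping symlinks.
cp -P "$work"/build/libdemo.so* "$work/dist/"

# Only libdemo.so.1.2.3 holds data; the other two remain relative links.
ls -l "$work/dist"
```

The links are relative, so they still resolve after being copied together into the destination directory.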

cp whisper.cpp/build/ggml/src/libggml-cpu.so buzz/whisper_cpp/ || true

|

test -f buzz/whisper_cpp/ggml-silero-v6.2.0.bin || curl -L -o buzz/whisper_cpp/ggml-silero-v6.2.0.bin https://huggingface.co/ggml-org/whisper-vad/resolve/main/ggml-silero-v6.2.0.bin

|

||||||
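The new rule downloads the Silero VAD model only when it is missing (`test -f FILE || curl -L -o FILE URL`). Below is a minimal sketch of the same idempotent pattern as a reusable function. `fetch_once` is a hypothetical name, and the sketch deliberately adds `-f`, which the Makefile rule does not use.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring the Makefile rule
#   test -f FILE || curl -L -o FILE URL
# Download URL to DEST only if DEST does not exist yet.
fetch_once() {
  local dest="$1" url="$2"
  if [ -f "$dest" ]; then
    echo "skip: $dest already exists"
    return 0
  fi
  mkdir -p "$(dirname "$dest")"
  # -L follows redirects; -f makes HTTP errors fail instead of saving the
  # error body. On failure, remove any partial file so that the existence
  # check above does not mistake it for a finished download next time.
  if ! curl -fL -o "$dest" "$url"; then
    rm -f "$dest"
    return 1
  fi
}
```

Usage: `fetch_once buzz/whisper_cpp/ggml-silero-v6.2.0.bin https://huggingface.co/ggml-org/whisper-vad/resolve/main/ggml-silero-v6.2.0.bin`. Without `-f` and the cleanup step, a failed request can leave an error page at the destination path, and `test -f` will then skip the download on every later build.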

cp whisper.cpp/build/ggml/src/ggml-vulkan/libggml-vulkan.so buzz/whisper_cpp/ || true

|

|

||||||

endif

|

endif

|

||||||

|

|

||||||

# Build on Macs

|

# Build on Macs

|

||||||

|

|

@@ -92,6 +103,7 @@ endif

|

||||||

cp whisper.cpp/build/bin/whisper-server buzz/whisper_cpp/ || true

|

cp whisper.cpp/build/bin/whisper-server buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/src/libwhisper.dylib buzz/whisper_cpp/ || true

|

cp whisper.cpp/build/src/libwhisper.dylib buzz/whisper_cpp/ || true

|

||||||

cp whisper.cpp/build/ggml/src/libggml* buzz/whisper_cpp/ || true

|

cp whisper.cpp/build/ggml/src/libggml* buzz/whisper_cpp/ || true

|

||||||

|

test -f buzz/whisper_cpp/ggml-silero-v6.2.0.bin || curl -L -o buzz/whisper_cpp/ggml-silero-v6.2.0.bin https://huggingface.co/ggml-org/whisper-vad/resolve/main/ggml-silero-v6.2.0.bin

|

||||||

endif

|

endif

|

||||||

|

|

||||||

# Prints all the Mac developer identities used for code signing

|

# Prints all the Mac developer identities used for code signing

|

||||||

|

|

@@ -184,26 +196,26 @@ gh_upgrade_pr:

|

||||||

# Internationalization

|

# Internationalization

|

||||||

|

|

||||||

translation_po_all:

|

translation_po_all:

|

||||||

$(MAKE) translation_po locale=en_US

|

|

||||||

$(MAKE) translation_po locale=ca_ES

|

$(MAKE) translation_po locale=ca_ES

|

||||||

$(MAKE) translation_po locale=es_ES

|

|

||||||

$(MAKE) translation_po locale=pl_PL

|

|

||||||

$(MAKE) translation_po locale=zh_CN

|

|

||||||

$(MAKE) translation_po locale=zh_TW

|

|

||||||

$(MAKE) translation_po locale=it_IT

|

|

||||||

$(MAKE) translation_po locale=lv_LV

|

|

||||||

$(MAKE) translation_po locale=uk_UA

|

|

||||||

$(MAKE) translation_po locale=ja_JP

|

|

||||||

$(MAKE) translation_po locale=da_DK

|

$(MAKE) translation_po locale=da_DK

|

||||||

$(MAKE) translation_po locale=de_DE

|

$(MAKE) translation_po locale=de_DE

|

||||||

|

$(MAKE) translation_po locale=en_US

|

||||||

|

$(MAKE) translation_po locale=es_ES

|

||||||

|

$(MAKE) translation_po locale=it_IT

|

||||||

|

$(MAKE) translation_po locale=ja_JP

|

||||||

|

$(MAKE) translation_po locale=lv_LV

|

||||||

$(MAKE) translation_po locale=nl

|

$(MAKE) translation_po locale=nl

|

||||||

|

$(MAKE) translation_po locale=pl_PL

|

||||||

$(MAKE) translation_po locale=pt_BR

|

$(MAKE) translation_po locale=pt_BR

|

||||||

|

$(MAKE) translation_po locale=uk_UA

|

||||||

|

$(MAKE) translation_po locale=zh_CN

|

||||||

|

$(MAKE) translation_po locale=zh_TW

|

||||||

|

|

||||||
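`translation_po_all` re-invokes `translation_po` once per locale, and after this change the locales are kept in alphabetical order. Below is a sketch of the same fan-out as a shell loop. `update_all_po` and the `MAKE_CMD` dry-run override are hypothetical and are not part of the Makefile.

```shell
#!/usr/bin/env bash
# Locales handled by translation_po_all, in the same alphabetical order.
LOCALES="ca_ES da_DK de_DE en_US es_ES it_IT ja_JP lv_LV nl pl_PL pt_BR uk_UA zh_CN zh_TW"

# Run `make translation_po locale=<xx>` for each locale, stopping at the
# first failure. Set MAKE_CMD=echo to print the commands instead.
update_all_po() {
  local locale
  for locale in $LOCALES; do
    ${MAKE_CMD:-make} translation_po locale="$locale" || return 1
  done
}
```

Usage: `MAKE_CMD=echo update_all_po` lists the 14 invocations without running them.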

TMP_POT_FILE_PATH := $(shell mktemp)

|

TMP_POT_FILE_PATH := $(shell mktemp)

|

||||||

PO_FILE_PATH := buzz/locale/${locale}/LC_MESSAGES/buzz.po

|

PO_FILE_PATH := buzz/locale/${locale}/LC_MESSAGES/buzz.po

|

||||||

translation_po:

|

translation_po:

|

||||||

mkdir -p buzz/locale/${locale}/LC_MESSAGES

|

mkdir -p buzz/locale/${locale}/LC_MESSAGES

|

||||||

xgettext --from-code=UTF-8 -o "${TMP_POT_FILE_PATH}" -l python $(shell find buzz -name '*.py')

|

xgettext --from-code=UTF-8 --add-location=file -o "${TMP_POT_FILE_PATH}" -l python $(shell find buzz -name '*.py')

|

||||||

sed -i.bak 's/CHARSET/UTF-8/' ${TMP_POT_FILE_PATH}

|

sed -i.bak 's/CHARSET/UTF-8/' ${TMP_POT_FILE_PATH}

|

||||||

if [ ! -f ${PO_FILE_PATH} ]; then \

|

if [ ! -f ${PO_FILE_PATH} ]; then \

|

||||||

msginit --no-translator --input=${TMP_POT_FILE_PATH} --output-file=${PO_FILE_PATH}; \

|

msginit --no-translator --input=${TMP_POT_FILE_PATH} --output-file=${PO_FILE_PATH}; \

|

||||||

|

|

|

||||||

98

README.ja_JP.md

Normal file


|

|

@@ -0,0 +1,98 @@

|

||||||

|

# Buzz

|

||||||

|

|

||||||

|

[Documentation](https://chidiwilliams.github.io/buzz/)

|

||||||

|

|

||||||

|

Transcribe and translate audio offline on your personal computer. Powered by OpenAI's [Whisper](https://github.com/openai/whisper).

|

||||||

|

|

||||||

|

|

||||||

|

[](https://github.com/chidiwilliams/buzz/actions/workflows/ci.yml)

|

||||||

|

[](https://codecov.io/github/chidiwilliams/buzz)

|

||||||

|

|

||||||

|

[](https://GitHub.com/chidiwilliams/buzz/releases/)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## Features

|

||||||

|

- Transcribe audio and video files or YouTube links

|

||||||

|

- Live real-time audio transcription from the microphone

|

||||||

|

- Presentation window, useful during events and presentations

|

||||||

|

- Speech separation before transcription for better accuracy on noisy audio

|

||||||

|

- Speaker identification in transcribed media

|

||||||

|

- Support for multiple Whisper backends

|

||||||

|

- CUDA acceleration support for Nvidia GPUs

|

||||||

|

- Apple Silicon support for Macs

|

||||||

|

- Vulkan acceleration support in Whisper.cpp (available on most GPUs, including integrated GPUs)

|

||||||

|

- Export transcripts in TXT, SRT, and VTT formats

|

||||||

|

- Advanced transcription viewer with search, playback controls, and speed adjustment

|

||||||

|

- Keyboard shortcuts for efficient navigation

|

||||||

|

- Watch folder for automatic transcription of new files

|

||||||

|

- Command-line interface for scripting and automation

|

||||||

|

|

||||||

|

## Installation

|

||||||

|

|

||||||

|

### macOS

|

||||||

|

|

||||||

|

Download the `.dmg` file from [SourceForge](https://sourceforge.net/projects/buzz-captions/files/).

|

||||||

|

|

||||||

|

### Windows

|

||||||

|

|

||||||

|

Get the installation files from [SourceForge](https://sourceforge.net/projects/buzz-captions/files/).

|

||||||

|

|

||||||

|

The app is not signed, so a warning will appear during installation. Select `詳細情報` (More info) -> `実行` (Run anyway).

|

||||||

|

|

||||||

|

### Linux

|

||||||

|

|

||||||

|

Buzz is available as a [Flatpak](https://flathub.org/apps/io.github.chidiwilliams.Buzz) or a [Snap](https://snapcraft.io/buzz).

|

||||||

|

|

||||||

|

To install the Flatpak, run:

|

||||||

|

```shell

|

||||||

|

flatpak install flathub io.github.chidiwilliams.Buzz

|

||||||

|

```

|

||||||

|

|

||||||

|

[](https://flathub.org/en/apps/io.github.chidiwilliams.Buzz)

|

||||||

|

|

||||||

|

To install the Snap, run:

|

||||||

|

```shell

|

||||||

|

sudo apt-get install libportaudio2 libcanberra-gtk-module libcanberra-gtk3-module

|

||||||

|

sudo snap install buzz

|

||||||

|

```

|

||||||

|

|

||||||

|

[](https://snapcraft.io/buzz)

|

||||||

|

|

||||||

|

### PyPI

|

||||||

|

|

||||||

|

Install [ffmpeg](https://www.ffmpeg.org/download.html).

|

||||||

|

|

||||||

|

Make sure you are using a Python 3.12 environment.

|

||||||

|

|

||||||

|

Install Buzz

|

||||||

|

|

||||||

|

```shell

|

||||||

|

pip install buzz-captions

|

||||||

|

python -m buzz

|

||||||

|

```

|

||||||

|

|

||||||

|

**GPU support for PyPI**

|

||||||

|

|

||||||

|

To enable GPU support for Nvidia GPUs on Windows with the PyPI-installed version, make sure [torch](https://pytorch.org/get-started/locally/) is installed with CUDA support.

|

||||||

|

|

||||||

|

```

|

||||||

|

pip3 install -U torch==2.8.0+cu129 torchaudio==2.8.0+cu129 --index-url https://download.pytorch.org/whl/cu129

|

||||||

|

pip3 install nvidia-cublas-cu12==12.9.1.4 nvidia-cuda-cupti-cu12==12.9.79 nvidia-cuda-runtime-cu12==12.9.79 --extra-index-url https://pypi.ngc.nvidia.com

|

||||||

|

```

|

||||||

|

|

||||||

|

### Latest development version

|

||||||

|

|

||||||

|

For how to get the latest development version, including the newest features and bug fixes, see the [FAQ](https://chidiwilliams.github.io/buzz/docs/faq#9-where-can-i-get-latest-development-version).

|

||||||

|

|

||||||

|

### Screenshots

|

||||||

|

|

||||||

|

<div style="display: flex; flex-wrap: wrap;">

|

||||||

|

<img alt="ファイルインポート" src="share/screenshots/buzz-1-import.png" style="max-width: 18%; margin-right: 1%;" />

|

||||||

|

<img alt="メイン画面" src="share/screenshots/buzz-2-main_screen.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

|

<img alt="設定" src="share/screenshots/buzz-3-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

|

<img alt="モデル設定" src="share/screenshots/buzz-3.2-model-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

|

<img alt="文字起こし" src="share/screenshots/buzz-4-transcript.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

|

<img alt="ライブ録音" src="share/screenshots/buzz-5-live_recording.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

|

<img alt="リサイズ" src="share/screenshots/buzz-6-resize.png" style="max-width: 18%;" />

|

||||||

|

</div>

|

||||||

104

README.md


|

|

@@ -2,7 +2,7 @@

|

||||||

|

|

||||||

# Buzz

|

# Buzz

|

||||||

|

|

||||||

[Documentation](https://chidiwilliams.github.io/buzz/) | [Buzz Captions on the App Store](https://apps.apple.com/us/app/buzz-captions/id6446018936?mt=12&itsct=apps_box_badge&itscg=30200)

|

[Documentation](https://chidiwilliams.github.io/buzz/)

|

||||||

|

|

||||||

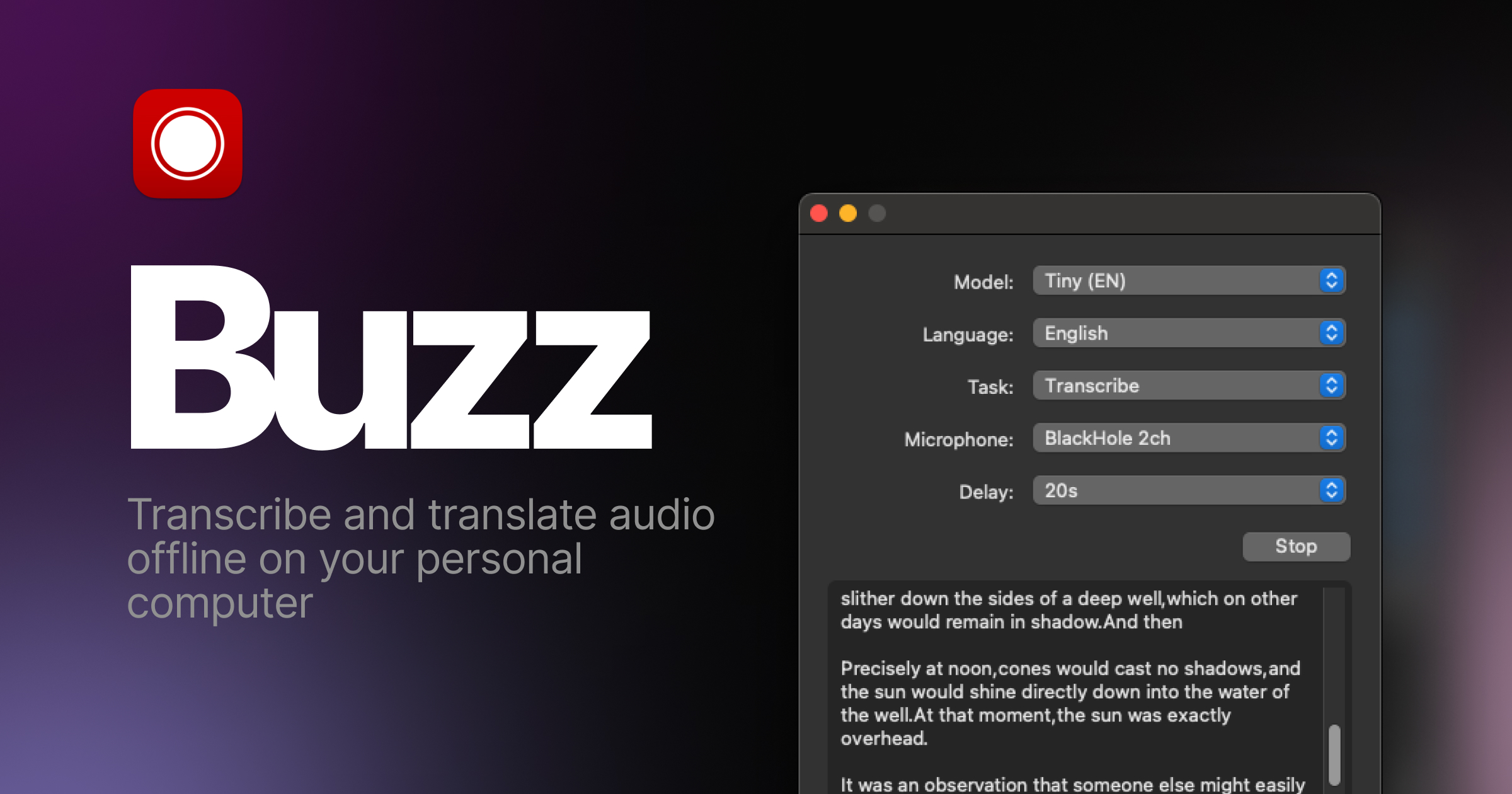

Transcribe and translate audio offline on your personal computer. Powered by

|

Transcribe and translate audio offline on your personal computer. Powered by

|

||||||

OpenAI's [Whisper](https://github.com/openai/whisper).

|

OpenAI's [Whisper](https://github.com/openai/whisper).

|

||||||

|

|

@@ -13,57 +13,36 @@ OpenAI's [Whisper](https://github.com/openai/whisper).

|

||||||

|

|

||||||

[](https://GitHub.com/chidiwilliams/buzz/releases/)

|

[](https://GitHub.com/chidiwilliams/buzz/releases/)

|

||||||

|

|

||||||

<blockquote>

|

|

||||||

<p>Buzz is better on the App Store. Get a Mac-native version of Buzz with a cleaner look, audio playback, drag-and-drop import, transcript editing, search, and much more.</p>

|

|

||||||

<a href="https://apps.apple.com/us/app/buzz-captions/id6446018936?mt=12&itsct=apps_box_badge&itscg=30200"><img src="https://toolbox.marketingtools.apple.com/api/badges/download-on-the-mac-app-store/black/en-us?size=250x83&releaseDate=1679529600" alt="Download on the Mac App Store" /></a>

|

|

||||||

</blockquote>

|

|

||||||

|

|

||||||

|

## Features

|

||||||

|

- Transcribe audio and video files or YouTube links

|

||||||

|

- Live real-time audio transcription from the microphone

|

||||||

|

- Presentation window for easy accessibility during events and presentations

|

||||||

|

- Speech separation before transcription for better accuracy on noisy audio

|

||||||

|

- Speaker identification in transcribed media

|

||||||

|

- Support for multiple Whisper backends

|

||||||

|

- CUDA acceleration support for Nvidia GPUs

|

||||||

|

- Apple Silicon support for Macs

|

||||||

|

- Vulkan acceleration support for Whisper.cpp on most GPUs, including integrated GPUs

|

||||||

|

- Export transcripts to TXT, SRT, and VTT

|

||||||

|

- Advanced Transcription Viewer with search, playback controls, and speed adjustment

|

||||||

|

- Keyboard shortcuts for efficient navigation

|

||||||

|

- Watch folder for automatic transcription of new files

|

||||||

|

- Command-Line Interface for scripting and automation

|

||||||

|

|

||||||

## Installation

|

## Installation

|

||||||

|

|

||||||

### PyPI

|

|

||||||

|

|

||||||

Install [ffmpeg](https://www.ffmpeg.org/download.html)

|

|

||||||

|

|

||||||

Install Buzz

|

|

||||||

|

|

||||||

```shell

|

|

||||||

pip install buzz-captions

|

|

||||||

python -m buzz

|

|

||||||

```

|

|

||||||

|

|

||||||

### macOS

|

### macOS

|

||||||

|

|

||||||

Install with [brew utility](https://brew.sh/)

|

Download the `.dmg` from [SourceForge](https://sourceforge.net/projects/buzz-captions/files/).

|

||||||

|

|

||||||

```shell

|

|

||||||

brew install --cask buzz

|

|

||||||

```

|

|

||||||

|

|

||||||

Or download the `.dmg` from the [releases page](https://github.com/chidiwilliams/buzz/releases/latest).

|

|

||||||

|

|

||||||

### Windows

|

### Windows

|

||||||

|

|

||||||

Download and run the `.exe` from the [releases page](https://github.com/chidiwilliams/buzz/releases/latest).

|

Get the installation files from [SourceForge](https://sourceforge.net/projects/buzz-captions/files/).

|

||||||

|

|

||||||

The app is not signed, so you will get a warning when you install it. Select `More info` -> `Run anyway`.

|

The app is not signed, so you will get a warning when you install it. Select `More info` -> `Run anyway`.

|

||||||

|

|

||||||

**Alternatively, install with [winget](https://learn.microsoft.com/en-us/windows/package-manager/winget/)**

|

|

||||||

|

|

||||||

```shell

|

|

||||||

winget install ChidiWilliams.Buzz

|

|

||||||

```

|

|

||||||

|

|

||||||

**GPU support for PyPI**

|

|

||||||

|

|

||||||

To have GPU support for Nvidia GPUS on Windows, for PyPI installed version ensure, CUDA support for [torch](https://pytorch.org/get-started/locally/)

|

|

||||||

|

|

||||||

```

|

|

||||||

pip3 install -U torch==2.7.1+cu128 torchaudio==2.7.1+cu128 --index-url https://download.pytorch.org/whl/cu128

|

|

||||||

pip3 install nvidia-cublas-cu12==12.8.3.14 nvidia-cuda-cupti-cu12==12.8.57 nvidia-cuda-nvrtc-cu12==12.8.61 nvidia-cuda-runtime-cu12==12.8.57 nvidia-cudnn-cu12==9.7.1.26 nvidia-cufft-cu12==11.3.3.41 nvidia-curand-cu12==10.3.9.55 nvidia-cusolver-cu12==11.7.2.55 nvidia-cusparse-cu12==12.5.4.2 nvidia-cusparselt-cu12==0.6.3 nvidia-nvjitlink-cu12==12.8.61 nvidia-nvtx-cu12==12.8.55 --extra-index-url https://pypi.ngc.nvidia.com

|

|

||||||

```

|

|

||||||

|

|

||||||

### Linux

|

### Linux

|

||||||

|

|

||||||

Buzz is available as a [Flatpak](https://flathub.org/apps/io.github.chidiwilliams.Buzz) or a [Snap](https://snapcraft.io/buzz).

|

Buzz is available as a [Flatpak](https://flathub.org/apps/io.github.chidiwilliams.Buzz) or a [Snap](https://snapcraft.io/buzz).

|

||||||

|

|

@@ -73,26 +52,55 @@ To install flatpak, run:

|

||||||

flatpak install flathub io.github.chidiwilliams.Buzz

|

flatpak install flathub io.github.chidiwilliams.Buzz

|

||||||

```

|

```

|

||||||

|

|

||||||

|

[](https://flathub.org/en/apps/io.github.chidiwilliams.Buzz)

|

||||||

|

|

||||||

To install snap, run:

|

To install snap, run:

|

||||||

```shell

|

```shell

|

||||||

sudo apt-get install libportaudio2 libcanberra-gtk-module libcanberra-gtk3-module

|

sudo apt-get install libportaudio2 libcanberra-gtk-module libcanberra-gtk3-module

|

||||||

sudo snap install buzz

|

sudo snap install buzz

|

||||||

sudo snap connect buzz:password-manager-service

|

```

|

||||||

|

|

||||||

|

[](https://snapcraft.io/buzz)

|

||||||

|

|

||||||

|

### PyPI

|

||||||

|

|

||||||

|

Install [ffmpeg](https://www.ffmpeg.org/download.html)

|

||||||

|

|

||||||

|

Make sure you are using a Python 3.12 environment.

|

||||||

|

|

||||||

|

Install Buzz

|

||||||

|

|

||||||

|

```shell

|

||||||

|

pip install buzz-captions

|

||||||

|

python -m buzz

|

||||||

|

```

|

||||||
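The Python 3.12 requirement is easy to miss when several interpreters are installed. A minimal guard could run before `pip install`. It assumes a POSIX shell and an interpreter named `python3` on `PATH`, both of which are assumptions. On Windows the interpreter is often invoked as `py` or `python`, which can be passed as the second argument. `require_python` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical pre-install check: fail unless the given interpreter
# (default: python3) reports the wanted major.minor version.
require_python() {
  local want="$1" py="${2:-python3}" have
  have=$("$py" -c 'import sys; print("%d.%d" % sys.version_info[:2])') || return 1
  if [ "$have" != "$want" ]; then
    echo "need Python $want, found $have ($py)" >&2
    return 1
  fi
}
```

Usage: `require_python 3.12 && pip install buzz-captions`.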

|

|

||||||

|

**GPU support for PyPI**

|

||||||

|

|

||||||

|

To enable GPU support for Nvidia GPUs on Windows with the PyPI-installed version, make sure [torch](https://pytorch.org/get-started/locally/) is installed with CUDA support:

|

||||||

|

|

||||||

|

```

|

||||||

|

pip3 install -U torch==2.8.0+cu129 torchaudio==2.8.0+cu129 --index-url https://download.pytorch.org/whl/cu129

|

||||||

|

pip3 install nvidia-cublas-cu12==12.9.1.4 nvidia-cuda-cupti-cu12==12.9.79 nvidia-cuda-runtime-cu12==12.9.79 --extra-index-url https://pypi.ngc.nvidia.com

|

||||||

```

|

```

|

||||||
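Wheels installed from the `cu129` index carry a `+cu129` local version label. Checking `torch.__version__` is therefore a quick way to confirm that the CUDA build was installed and did not silently fall back to a CPU-only wheel. Below is a sketch of that check. The helper names are hypothetical, and `torch.cuda.is_available()` remains the authoritative runtime test, because it also depends on the driver and the GPU being present.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the local build label from a PyTorch
# version string, e.g. "2.8.0+cu129" -> "cu129", "2.8.0+cpu" -> "cpu".
torch_build_tag() {
  case "$1" in
    *+*) echo "${1##*+}" ;;
    *)   echo "unknown" ;;  # no "+label": build type not encoded
  esac
}

# Succeed only for CUDA builds ("cu" followed by CUDA version digits).
is_cuda_build() {
  case "$(torch_build_tag "$1")" in
    cu[0-9]*) return 0 ;;
    *)        return 1 ;;
  esac
}
```

Usage: `is_cuda_build "$(python -c 'import torch; print(torch.__version__)')" && python -c 'import torch; print(torch.cuda.is_available())'`.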

|

|

||||||

### Latest development version

|

### Latest development version

|

||||||

|

|

||||||

For information on getting the latest development version, with the newest features and bug fixes, see the [FAQ](https://chidiwilliams.github.io/buzz/docs/faq#9-where-can-i-get-latest-development-version).

|

For information on getting the latest development version, with the newest features and bug fixes, see the [FAQ](https://chidiwilliams.github.io/buzz/docs/faq#9-where-can-i-get-latest-development-version).

|

||||||

|

|

||||||

|

### Support Buzz

|

||||||

|

|

||||||

|

You can help Buzz by starring 🌟 the repo and sharing it with your friends.

|

||||||

|

|

||||||

### Screenshots

|

### Screenshots

|

||||||

|

|

||||||

<div style="display: flex; flex-wrap: wrap;">

|

<div style="display: flex; flex-wrap: wrap;">

|

||||||

<img alt="File import" src="share/screenshots/buzz-1-import.png" style="max-width: 18%; margin-right: 1%;" />

|

<img alt="File import" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-1-import.png" style="max-width: 18%; margin-right: 1%;" />

|

||||||

<img alt="Main screen" src="share/screenshots/buzz-2-main_screen.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

<img alt="Main screen" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-2-main_screen.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

<img alt="Preferences" src="share/screenshots/buzz-3-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

<img alt="Preferences" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-3-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

<img alt="Model preferences" src="share/screenshots/buzz-3.2-model-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

<img alt="Model preferences" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-3.2-model-preferences.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

<img alt="Transcript" src="share/screenshots/buzz-4-transcript.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

<img alt="Transcript" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-4-transcript.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

<img alt="Live recording" src="share/screenshots/buzz-5-live_recording.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

<img alt="Live recording" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-5-live_recording.png" style="max-width: 18%; margin-right: 1%; height:auto;" />

|

||||||

<img alt="Resize" src="share/screenshots/buzz-6-resize.png" style="max-width: 18%;" />

|

<img alt="Resize" src="https://github.com/chidiwilliams/buzz/raw/main/share/screenshots/buzz-6-resize.png" style="max-width: 18%;" />

|

||||||

</div>

|

</div>

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@@ -1 +1 @@

|

||||||

VERSION = "1.3.2"

|

VERSION = "1.4.4"

|

||||||

|

|

|

||||||

6

buzz/assets/icons/color-background.svg

Normal file


|

|

@@ -0,0 +1,6 @@

|

||||||

|

<?xml version="1.0" encoding="utf-8"?><!-- Uploaded to: SVG Repo, www.svgrepo.com, Generator: SVG Repo Mixer Tools -->

|

||||||

|

<svg width="800px" height="800px" viewBox="0 0 24 24" fill="none" xmlns="http://www.w3.org/2000/svg">

|

||||||

|

<path d="M6.75 1C6.33579 1 6 1.33579 6 1.75V3.50559C5.96824 3.53358 5.93715 3.56276 5.9068 3.59311L1.66416 7.83575C0.883107 8.6168 0.883107 9.88313 1.66416 10.6642L5.19969 14.1997C5.98074 14.9808 7.24707 14.9808 8.02812 14.1997L12.2708 9.95707C13.0518 9.17602 13.0518 7.90969 12.2708 7.12864L8.73522 3.59311C8.39027 3.24816 7.95066 3.05555 7.5 3.0153V1.75C7.5 1.33579 7.16421 1 6.75 1ZM6 5.62123V6.25C6 6.66421 6.33579 7 6.75 7C7.16421 7 7.5 6.66421 7.5 6.25V4.54033C7.56363 4.56467 7.62328 4.60249 7.67456 4.65377L11.2101 8.1893C11.2995 8.27875 11.348 8.39366 11.3555 8.51071H3.11052L6 5.62123ZM6.26035 13.1391L3.132 10.0107H10.0958L6.96746 13.1391C6.77219 13.3343 6.45561 13.3343 6.26035 13.1391Z" fill="#212121"/>

|

||||||

|

<path d="M2 17.5V12.4143L3.5 13.9143V17.5C3.5 18.0523 3.94772 18.5 4.5 18.5H19.5C20.0523 18.5 20.5 18.0523 20.5 17.5V6.5C20.5 5.94771 20.0523 5.5 19.5 5.5H12.0563L10.5563 4H19.5C20.8807 4 22 5.11929 22 6.5V17.5C22 18.8807 20.8807 20 19.5 20H4.5C3.11929 20 2 18.8807 2 17.5Z" fill="#212121"/>

|

||||||

|

<path d="M11 14.375C11 13.8816 11.1541 13.4027 11.3418 12.9938C11.5325 12.5784 11.7798 12.1881 12.0158 11.8595C12.2531 11.5289 12.4888 11.247 12.6647 11.0481C12.7502 10.9515 12.9062 10.7867 12.9642 10.7254L12.9697 10.7197C13.2626 10.4268 13.7374 10.4268 14.0303 10.7197L14.3353 11.0481C14.5112 11.247 14.7469 11.5289 14.9842 11.8595C15.2202 12.1881 15.4675 12.5784 15.6582 12.9938C15.8459 13.4027 16 13.8816 16 14.375C16 15.7654 14.9711 17 13.5 17C12.0289 17 11 15.7654 11 14.375ZM13.7658 12.7343C13.676 12.6092 13.5858 12.4916 13.5 12.3844C13.4142 12.4916 13.324 12.6092 13.2342 12.7343C13.0327 13.015 12.8425 13.32 12.7051 13.6195C12.5647 13.9253 12.5 14.1808 12.5 14.375C12.5 15.0663 12.9809 15.5 13.5 15.5C14.0191 15.5 14.5 15.0663 14.5 14.375C14.5 14.1808 14.4353 13.9253 14.2949 13.6195C14.1575 13.32 13.9673 13.015 13.7658 12.7343Z" fill="#212121"/>

|

||||||

|

</svg>

|

||||||

|


5

buzz/assets/icons/fullscreen.svg

Normal file


|

|

@@ -0,0 +1,5 @@

|

||||||

|

<?xml version="1.0" encoding="utf-8"?><!-- Uploaded to: SVG Repo, www.svgrepo.com, Generator: SVG Repo Mixer Tools -->

|

||||||

|

<svg width="800px" height="800px" viewBox="0 0 24 24" fill="none" xmlns="http://www.w3.org/2000/svg">

|

||||||

|

<path d="M21.7092 2.29502C21.8041 2.3904 21.8757 2.50014 21.9241 2.61722C21.9727 2.73425 21.9996 2.8625 22 2.997L22 3V9C22 9.55228 21.5523 10 21 10C20.4477 10 20 9.55228 20 9V5.41421L14.7071 10.7071C14.3166 11.0976 13.6834 11.0976 13.2929 10.7071C12.9024 10.3166 12.9024 9.68342 13.2929 9.29289L18.5858 4H15C14.4477 4 14 3.55228 14 3C14 2.44772 14.4477 2 15 2H20.9998C21.2749 2 21.5242 2.11106 21.705 2.29078L21.7092 2.29502Z" fill="#000000"/>

|

||||||

|

<path d="M10.7071 14.7071L5.41421 20H9C9.55228 20 10 20.4477 10 21C10 21.5523 9.55228 22 9 22H3.00069L2.997 22C2.74301 21.9992 2.48924 21.9023 2.29502 21.7092L2.29078 21.705C2.19595 21.6096 2.12432 21.4999 2.07588 21.3828C2.02699 21.2649 2 21.1356 2 21V15C2 14.4477 2.44772 14 3 14C3.55228 14 4 14.4477 4 15V18.5858L9.29289 13.2929C9.68342 12.9024 10.3166 12.9024 10.7071 13.2929C11.0976 13.6834 11.0976 14.3166 10.7071 14.7071Z" fill="#000000"/>

|

||||||

|

</svg>

|

||||||

|


2

buzz/assets/icons/gui-text-color.svg

Normal file


|

|

@@ -0,0 +1,2 @@

|

||||||

|

<?xml version="1.0" encoding="utf-8"?><!-- Uploaded to: SVG Repo, www.svgrepo.com, Generator: SVG Repo Mixer Tools -->

|

||||||

|

<svg fill="#000000" width="800px" height="800px" viewBox="0 0 14 14" role="img" focusable="false" aria-hidden="true" xmlns="http://www.w3.org/2000/svg"><path d="M 7.5291661,11.795909 C 7.4168129,11.419456 7.3406864,10.225625 7.3406864,9.29222 c 0,-0.11438 -0.029767,-0.221667 -0.081573,-0.314893 0.051933,-0.115773 0.08132,-0.24358 0.08132,-0.378226 l 0,-1.709364 c 0,-0.511733 -0.416226,-0.927959 -0.9279585,-0.927959 l -0.8772919,0 C 5.527203,5.856265 5.52163,5.751005 5.518336,5.648406 5.514666,5.556066 5.513396,5.470313 5.513016,5.385826 5.511876,5.296776 5.5132694,5.224073 5.517196,5.160866 5.524666,5.024193 5.541009,4.891827 5.565076,4.773647 5.591043,4.646981 5.619669,4.564774 5.630689,4.535134 c 0.0019,-0.0052 0.0038,-0.01013 0.00557,-0.01533 0.00709,-0.02039 0.0133,-0.03559 0.017227,-0.04446 C 6.0127121,3.789698 5.750766,2.938499 5.0665137,2.5737 4.8642273,2.466034 4.6367344,2.409034 4.4084814,2.408147 4.1801018,2.409034 3.9526089,2.466037 3.7504492,2.5737 3.066197,2.938499 2.8042508,3.789698 3.1634768,4.475344 c 0.00393,0.0087 0.01026,0.02394 0.017227,0.04446 0.00177,0.0052 0.00367,0.01013 0.00557,0.01533 0.01102,0.02951 0.039647,0.111847 0.065613,0.238513 0.024067,0.11818 0.040533,0.250546 0.04788,0.387219 0.00393,0.06321 0.00532,0.135914 0.00418,0.22496 -5.066e-4,0.08449 -0.00165,0.17024 -0.00532,0.26258 -0.00329,0.102599 -0.00887,0.207859 -0.016847,0.313372 l -0.8772919,0 c -0.5117324,0 -0.9279584,0.416226 -0.9279584,0.927959 l 0,1.709364 c 0,0.134646 0.029387,0.262453 0.08132,0.378226 -0.051807,0.09323 -0.081573,0.200513 -0.081573,0.314893 0,0.933278 -0.076126,2.127236 -0.1884796,2.503689 C 1.0571435,11.985782 1.0131902,12.254315 1.0562568,12.453434 1.1748167,13 1.7477291,13 1.9359554,13 c 0.437506,0 1.226258,-0.07676 1.2595712,-0.08005 0.05092,-0.0051 0.1001932,-0.01596 0.1468065,-0.03179 0.049907,0.01241 0.1018398,0.01913 0.1546597,0.01925 l 0.9114918,0.0044 0.9114918,-0.0044 c 0.05282,-1.27e-4 0.1047532,-0.007 0.1546598,-0.01925 0.046613,0.01583 
0.095886,0.02673 0.1468064,0.03179 C 5.6547556,12.92315 6.4436346,13 6.8810138,13 c 0.1882264,0 0.7612654,0 0.8796986,-0.546566 0.043067,-0.199119 -7.6e-4,-0.467652 -0.2315463,-0.657525 z m -1.833117,0.502486 -0.3480794,-1.518478 -0.1741664,1.503658 -1.6846638,-7.6e-4 -0.3680927,-0.885399 0,0.900979 c 0,0 -1.7672504,0.173279 -1.3861111,0 0.3811394,-0.173154 0.3811394,-2.980082 0.3811394,-2.980082 l 2.2924095,0 2.2924095,0 c 0,0 0,2.806928 0.3811394,2.980082 0.381266,0.173279 -1.3859844,0 -1.3859844,0 z M 10.219055,1 7.3387864,1 5.8932688,5.377719 l 0.9449318,0 c 0.3536527,0 0.6674055,0.17138 0.8650052,0.434593 l 0.04864,-0.18392 0.9107318,-2.702555 0.2962729,-0.0016 0.9543051,2.889769 -2.2085564,0 C 7.839499,5.994632 7.9204389,6.217692 7.9204389,6.459878 l 0,1.257038 2.3962751,0 0.423193,1.60917 2.218563,0 L 10.219055,1 Z"/></svg>

|

||||||

|


7

buzz/assets/icons/new-window.svg

Normal file


|

|

@@ -0,0 +1,7 @@

|

||||||

|

<?xml version="1.0" encoding="utf-8"?><!-- Uploaded to: SVG Repo, www.svgrepo.com, Generator: SVG Repo Mixer Tools -->

|

||||||

|

<svg width="800px" height="800px" viewBox="-0.5 0 25 25" fill="none" xmlns="http://www.w3.org/2000/svg">

|

||||||

|

<path d="M8.93994 9.39998V5.48999C8.93994 5.20999 9.15994 4.98999 9.43994 4.98999H20.9999C21.2799 4.98999 21.4999 5.20999 21.4999 5.48999V13.09C21.4999 13.37 21.2799 13.59 20.9999 13.59L17.0599 13.6" stroke="#0F0F0F" stroke-miterlimit="10" stroke-linecap="round" stroke-linejoin="round"/>

|

||||||

|